
Motion capture is the process of analyzing the movements of objects or humans from video data. Potential application fields include animation for 3D movie production, sports science, and medical applications. Instead of using artificial markers attached to the body and expensive lab equipment, we are interested in tracking humans from video streams without special preparation of the subject. This is even more challenging for outdoor scenes, clothed people, and interacting people.

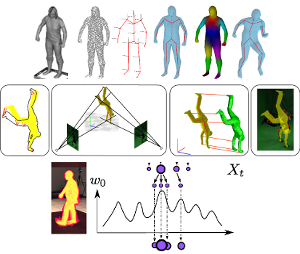

Model-Based Pose Estimation. In: Visual Analysis of Humans: Looking at People, Springer, June 2011. Gerard Pons-Moll and Bodo Rosenhahn.

Abstract: Model-based pose estimation algorithms aim at recovering human motion from one or more camera views and a 3D model representation of the human body. The model pose is usually parameterized with a kinematic chain, and thereby the pose is represented by a vector of joint angles. The majority of algorithms are based on minimizing an error function that measures how well the 3D model fits the image. This category of algorithms usually has two main stages, namely defining the model and fitting the model to image observations. In the first section, the reader is introduced to the different kinematic parametrizations of human motion. In the second section, the most commonly used representations of human shape are described. The third section is dedicated to the description of different error functions proposed in the literature and of common optimization techniques used for human pose estimation. Specifically, local optimization and particle-based optimization and filtering are discussed and compared. The chapter concludes with a discussion of the state of the art in model-based pose estimation, current limitations, and future directions.
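
The two stages described above can be illustrated with a deliberately tiny example. The first stage is a kinematic chain parameterized by joint angles, and the second is the minimization of a model-to-image error. This is a minimal sketch under stated assumptions, not the chapter's method. It uses a planar chain with hypothetical segment lengths. It replaces the image-based error function with squared distances between predicted and observed 2D joint positions. It uses simple coordinate descent as the local optimizer. All function names are illustrative.

```python
import math

def forward_kinematics(angles, lengths):
    """Return the 2D positions of every joint of a planar kinematic chain.

    Each angle is relative to the previous segment, so the pose is fully
    described by the vector of joint angles.
    """
    x, y, heading = 0.0, 0.0, 0.0
    joints = [(x, y)]
    for theta, length in zip(angles, lengths):
        heading += theta
        x += length * math.cos(heading)
        y += length * math.sin(heading)
        joints.append((x, y))
    return joints

def pose_error(angles, lengths, observed):
    """Sum of squared distances between model joints and observed joints."""
    predicted = forward_kinematics(angles, lengths)
    return sum((px - ox) ** 2 + (py - oy) ** 2
               for (px, py), (ox, oy) in zip(predicted, observed))

def fit_pose(initial, lengths, observed, step=0.1, iterations=500):
    """Local optimization by coordinate descent with a shrinking step size."""
    angles = list(initial)
    best = pose_error(angles, lengths, observed)
    for _ in range(iterations):
        improved = False
        for i in range(len(angles)):
            for delta in (step, -step):
                candidate = angles[:]
                candidate[i] += delta
                err = pose_error(candidate, lengths, observed)
                if err < best:
                    angles, best, improved = candidate, err, True
        if not improved:
            step *= 0.5  # refine the search once no single-joint move helps
    return angles, best
```

For example, `fit_pose([0.0, 0.0], [1.0, 1.0], forward_kinematics([0.4, -0.3], [1.0, 1.0]))` recovers joint angles close to `[0.4, -0.3]`. Real systems compare the model against image features instead of synthetic joint positions, and they use the local or particle-based optimizers discussed in the chapter.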


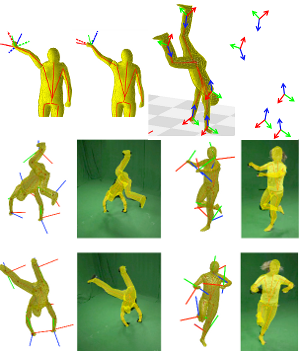

Outdoor Human Motion Capture using Inverse Kinematics and von Mises-Fisher Sampling. IEEE International Conference on Computer Vision (ICCV 2011). Gerard Pons-Moll, Andreas Baak, Juergen Gall, Laura Leal-Taixe, …

Abstract: Human motion capturing (HMC) from multiview image sequences constitutes an extremely difficult problem due to depth and orientation ambiguities and the high dimensionality of the state space. In this paper, we introduce a novel hybrid HMC system that combines video input with sparse inertial sensor input. Employing an annealing particle-based optimization scheme, our idea is to use orientation cues derived from the inertial input to sample particles from the manifold of valid poses. Then, visual cues derived from the video input are used to weight these particles and to iteratively derive the final pose. As our main contribution, we propose an efficient sampling procedure where hypotheses are derived analytically using state decomposition and inverse kinematics on the orientation cues. Additionally, we introduce a novel sensor noise model based on the von Mises-Fisher distribution to account for uncertainties. In doing so, orientation constraints are naturally fulfilled and the number of needed particles can be kept very small. More generally, our method can be used to sample poses that fulfill arbitrary orientation or positional kinematic constraints. In the experiments, we show that our system can track even highly dynamic motions in an outdoor setting with changing illumination, background clutter, and shadows.
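
The von Mises-Fisher (vMF) distribution mentioned in the abstract is a Gaussian-like distribution on the unit sphere. It has a mean direction and a concentration parameter kappa. As a hedged illustration of how orientation noise could be sampled, the sketch below draws unit vectors from a vMF distribution on the 2-sphere. It uses the standard inverse-CDF construction for three dimensions. It is not the paper's noise model or sampler, and the function names are hypothetical.

```python
import math
import random

def _normalize(v):
    n = math.sqrt(sum(c * c for c in v))
    return [c / n for c in v]

def _cross(a, b):
    return [a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0]]

def sample_vmf_s2(mu, kappa, rng=random):
    """Draw one unit vector from a von Mises-Fisher distribution on the 2-sphere.

    mu is the mean direction and kappa > 0 the concentration; larger kappa
    keeps samples closer to mu.
    """
    mu = _normalize(mu)
    # Inverse-CDF sample of w = cos(angle to mu), whose density is proportional
    # to exp(kappa * w) on [-1, 1]; this form avoids overflow for large kappa.
    u = 1.0 - rng.random()  # u in (0, 1], so the log argument stays positive
    w = 1.0 + math.log(u + (1.0 - u) * math.exp(-2.0 * kappa)) / kappa
    # The component orthogonal to mu points in a uniformly random direction.
    phi = rng.uniform(0.0, 2.0 * math.pi)
    s = math.sqrt(max(0.0, 1.0 - w * w))
    # Orthonormal basis (e1, e2) of the plane orthogonal to mu.
    helper = [1.0, 0.0, 0.0] if abs(mu[0]) < 0.9 else [0.0, 1.0, 0.0]
    dot = sum(h * m for h, m in zip(helper, mu))
    e1 = _normalize([h - dot * m for h, m in zip(helper, mu)])
    e2 = _cross(mu, e1)
    return [w * m + s * (math.cos(phi) * a + math.sin(phi) * b)
            for m, a, b in zip(mu, e1, e2)]
```

With `kappa = 50`, samples around `mu = [0, 0, 1]` have an expected z-component of coth(50) - 1/50, roughly 0.98. Lowering kappa spreads the samples over the sphere.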


Efficient and Robust Shape Matching for Model Based Human Motion Capture. 33rd Annual Symposium of the German Association for Pattern Recognition (DAGM 2011). Gerard Pons-Moll, Laura Leal-Taixe, Tri Truong and Bodo Rosenhahn.

Abstract: In this paper we present a robust and efficient shape matching approach for marker-less motion capture. Extracted features such as contour, gradient orientations and the turning function of the shape are embedded in a 1-D string. We formulate shape matching as a Linear Assignment Problem and propose to use Dynamic Time Warping on the string representation of shapes to discard unlikely correspondences and thereby reduce ambiguities and spurious local minima. Furthermore, the proposed cost matrix pruning results in robustness to scaling, rotation and topological changes and greatly reduces the computational cost. We show that our approach can track fast human motions where standard articulated Iterative Closest Point algorithms fail.
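
The abstract applies Dynamic Time Warping (DTW) to the 1-D string representation of shapes. DTW finds the cheapest monotone alignment between two sequences. The sketch below computes the DTW cost and the alignment path for plain numeric sequences, using absolute difference as the local cost. The paper's feature strings, its cost function, and the way it uses the path to prune the assignment cost matrix are not reproduced here.

```python
def dtw_align(a, b, cost=lambda x, y: abs(x - y)):
    """Dynamic Time Warping between two 1-D sequences.

    Returns the minimal alignment cost and the warping path as a list of
    index pairs (i, j) matching a[i] with b[j].
    """
    inf = float("inf")
    n, m = len(a), len(b)
    # D[i][j] is the cheapest cost of aligning a[:i] with b[:j].
    D = [[inf] * (m + 1) for _ in range(n + 1)]
    D[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            D[i][j] = cost(a[i - 1], b[j - 1]) + min(
                D[i - 1][j],      # advance in a only
                D[i][j - 1],      # advance in b only
                D[i - 1][j - 1],  # advance in both
            )
    # Backtrack from the end to recover which elements were matched.
    path, i, j = [], n, m
    while i > 0 and j > 0:
        path.append((i - 1, j - 1))
        _, i, j = min((D[i - 1][j - 1], i - 1, j - 1),
                      (D[i - 1][j], i - 1, j),
                      (D[i][j - 1], i, j - 1))
    path.reverse()
    return D[n][m], path
```

`dtw_align([1, 2, 3], [1, 2, 2, 3])` returns a cost of 0.0 and the path `[(0, 0), (1, 1), (1, 2), (2, 3)]`. One way to prune a cost matrix, assumed here for illustration, is to keep only candidate correspondences that lie near such a path.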


Multisensor-Fusion for 3D Full-Body Human Motion Capture. IEEE Conference on Computer Vision and Pattern Recognition (CVPR 2010). Gerard Pons-Moll, Andreas Baak, Thomas Helten, Meinard Müller, …

Abstract: In this work, we present an approach to fuse video with orientation data obtained from extended inertial sensors to improve and stabilize full-body human motion capture. Even though video data is a strong cue for motion analysis, tracking artifacts occur frequently due to ambiguities in the images, rapid motions, occlusions or noise. As a complementary data source, inertial sensors allow for drift-free estimation of limb orientations even under fast motions. However, accurate position information cannot be obtained in continuous operation. Therefore, we propose a hybrid tracker that combines video with a small number of inertial units to compensate for the drawbacks of each sensor type: on the one hand, we obtain drift-free and accurate position information from video data and, on the other hand, we obtain accurate limb orientations and good performance under fast motions from inertial sensors. In several experiments we demonstrate the increased performance and stability of our human motion tracker.
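
The complementary roles of the two sensors can be illustrated with a toy combined cost. This is not the paper's tracker. The sketch assumes a single planar limb with a known root position. A video cue observes the limb tip, and an inertial cue reports the limb angle directly. The hypothetical weights `w_video` and `w_imu` trade off the two terms, and a brute-force grid search stands in for a real optimizer.

```python
import math

def wrap_angle(a):
    """Map an angle to the interval [-pi, pi)."""
    return (a + math.pi) % (2.0 * math.pi) - math.pi

def fused_cost(theta, root, length, tip_obs, theta_imu, w_video=1.0, w_imu=1.0):
    """Weighted sum of a video (position) residual and an inertial (orientation) residual."""
    tip = (root[0] + length * math.cos(theta), root[1] + length * math.sin(theta))
    e_video = (tip[0] - tip_obs[0]) ** 2 + (tip[1] - tip_obs[1]) ** 2
    e_imu = wrap_angle(theta - theta_imu) ** 2
    return w_video * e_video + w_imu * e_imu

def estimate_angle(root, length, tip_obs, theta_imu, steps=3600, **weights):
    """Brute-force search over theta in [-pi, pi) for the lowest fused cost."""
    candidates = (-math.pi + 2.0 * math.pi * k / steps for k in range(steps))
    return min(candidates,
               key=lambda t: fused_cost(t, root, length, tip_obs, theta_imu, **weights))
```

Suppose the tip observation is unreliable, for example because the limb is occluded. Passing `w_video=0.0` then leaves the inertial term to decide the angle. Conversely, a zero `w_imu` relies purely on the video cue.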


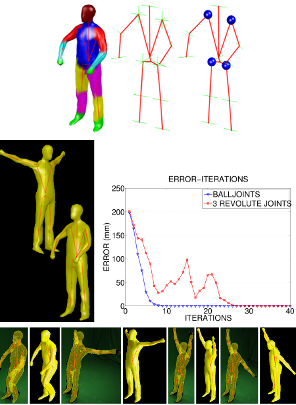

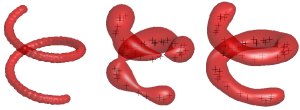

Ball Joints for Marker-less Human Motion Capture. IEEE Workshop on Applications for Computer Vision (WACV 2009) of the WVM. Gerard Pons-Moll and Bodo Rosenhahn.

Abstract: This work presents an approach for the modeling and numerical optimization of ball joints within a marker-less motion capture (MoCap) framework. In skeleton-based approaches, kinematic chains are commonly used to model 1 DoF revolute joints. A 3 DoF joint (e.g. a shoulder or hip) is consequently modeled by concatenating three consecutive 1 DoF revolute joints. Such a representation is not optimal, and singularities can occur. Therefore, we propose to model 3 DoF joints as spherical joints, or ball joints, using the representation of a twist and its exponential mapping (known from 1 DoF revolute joints). The exact modeling and numerical optimization of ball joints additionally requires the adjoint transform and the logarithm of the exponential mapping. Experiments with simulated and real data demonstrate that ball joints can represent arbitrary rotations better than the concatenation of 3 revolute joints. Moreover, we demonstrate that the 3 revolute joints representation is very similar to the Euler angles representation and has the same limitations in terms of singularities.
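
Consider a ball joint centered at the origin. The rotational part of its twist reduces to a 3-vector omega, whose direction is the rotation axis and whose length is the rotation angle. The exponential of omega is given by Rodrigues' formula, and the logarithm maps a rotation matrix back to omega. The sketch below implements only these two maps for pure rotations, using plain lists as matrices. It omits the translational part of the twist and the adjoint transform that the abstract also mentions. The function names are illustrative.

```python
import math

def hat(w):
    """Skew-symmetric matrix whose product with a vector v equals the cross product w x v."""
    return [[0.0, -w[2], w[1]],
            [w[2], 0.0, -w[0]],
            [-w[1], w[0], 0.0]]

def matmul(A, B):
    return [[sum(A[i][k] * B[k][j] for k in range(3)) for j in range(3)] for i in range(3)]

def exp_so3(w):
    """Rodrigues' formula: rotation matrix exp(hat(w)) for a 3-DoF rotation vector w."""
    theta = math.sqrt(sum(c * c for c in w))
    I = [[1.0 if i == j else 0.0 for j in range(3)] for i in range(3)]
    if theta < 1e-12:
        return I
    K = hat([c / theta for c in w])  # unit rotation axis as a skew matrix
    K2 = matmul(K, K)
    s, c = math.sin(theta), 1.0 - math.cos(theta)
    return [[I[i][j] + s * K[i][j] + c * K2[i][j] for j in range(3)] for i in range(3)]

def log_so3(R):
    """Inverse of exp_so3 for rotation angles in [0, pi); returns the rotation vector."""
    cos_theta = max(-1.0, min(1.0, (R[0][0] + R[1][1] + R[2][2] - 1.0) / 2.0))
    theta = math.acos(cos_theta)
    if theta < 1e-12:
        return [0.0, 0.0, 0.0]
    # R - R^T = 2 sin(theta) hat(axis), so read the axis off the skew part.
    scale = theta / (2.0 * math.sin(theta))
    return [scale * (R[2][1] - R[1][2]),
            scale * (R[0][2] - R[2][0]),
            scale * (R[1][0] - R[0][1])]
```

Round-tripping `log_so3(exp_so3(w))` recovers `w` for rotation angles below pi. Near pi, the `sin(theta)` denominator becomes small, and a dedicated branch would be needed.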


Learning Skeletons for Shape and Pose. ACM SIGGRAPH Symposium on Interactive 3D Graphics and Games. Nils Hasler, Thorsten Thormaelen, Bodo Rosenhahn and Hans-Peter Seidel.

Abstract: In this paper, a method is presented for estimating a rigid skeleton, including skinning weights, skeleton connectivity, and joint positions, from a sparse set of example poses. In contrast to other methods, we are able to simultaneously take examples of different subjects into account, which improves the robustness of the estimation. It is additionally possible to generate a skeleton that primarily describes variations in body shape instead of pose. The shape skeleton can then be combined with a regular pose-varying skeleton. That way, pose and body shape can be controlled simultaneously but separately. As this skeleton is technically still just a skinned rigid skeleton, compatibility with major modelling packages and game engines is retained. We further present an approach for synthesizing a suitable bind shape that additionally improves the accuracy of the generated model.
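
A skinned rigid skeleton, as mentioned in the abstract, deforms each mesh vertex by blending the rigid transforms of the bones it is attached to. The blend is weighted by the vertex's skinning weights. The sketch below shows standard linear blend skinning in 2D. It assumes the weights and bone transforms are already known and does not estimate them, which is the problem the paper addresses. The names are hypothetical.

```python
import math

def rotation_2d(angle, tx=0.0, ty=0.0):
    """Rigid 2D bone transform stored as (rotation matrix rows, translation)."""
    c, s = math.cos(angle), math.sin(angle)
    return ((c, -s), (s, c)), (tx, ty)

def apply_transform(transform, p):
    """Apply a rigid 2D transform to point p."""
    (r0, r1), (tx, ty) = transform
    return (r0[0] * p[0] + r0[1] * p[1] + tx,
            r1[0] * p[0] + r1[1] * p[1] + ty)

def linear_blend_skinning(vertex, bone_transforms, weights):
    """Deform a rest-pose vertex by the weight-blended transforms of its bones.

    weights are the skinning weights of this vertex and should sum to one.
    """
    x = y = 0.0
    for transform, w in zip(bone_transforms, weights):
        px, py = apply_transform(transform, vertex)
        x += w * px
        y += w * py
    return (x, y)
```

Consider blending an unrotated bone with one rotated by 90 degrees, at equal weights. The vertex `(1, 0)` moves to `(0.5, 0.5)`, whose distance from the joint is about 0.71 instead of 1. This shrinkage is a well-known limitation of linearly blending rotations.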


Markerless Motion Capture with Unsynchronized Moving Cameras. IEEE Conference on Computer Vision and Pattern Recognition (CVPR 2009). Nils Hasler, Bodo Rosenhahn, Thorsten Thormaelen, Michael Wand, Juergen Gall and Hans-Peter Seidel.

Abstract: In this work we present an approach for markerless motion capture (MoCap) of articulated objects, which are recorded with multiple unsynchronized moving cameras. Instead of using fixed (and expensive) hardware-synchronized cameras, this approach allows us to track people with off-the-shelf handheld video cameras. To prepare a sequence for motion capture, we first reconstruct the static background and the position of each camera using Structure-from-Motion (SfM). Then the cameras are registered to each other using the reconstructed static background geometry. Camera synchronization is achieved via the audio streams recorded by the cameras in parallel. Finally, a markerless MoCap approach is applied to recover positions and joint configurations of subjects. Feature tracks and dense background geometry are further used to stabilize the MoCap. The experiments show examples with highly challenging indoor and outdoor scenes.
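
The abstract states that cameras are synchronized via their audio streams, but it does not describe the exact procedure. A common approach, assumed here, is to choose the time shift that maximizes the cross-correlation between two recordings. The sketch below searches integer sample lags by brute force. Dividing the resulting lag by the audio sample rate gives the offset in seconds.

```python
def estimate_lag(reference, other, max_lag):
    """Integer lag (in samples) that best aligns two audio signals.

    A positive result L means that a sound at reference[n] appears at
    other[n + L]. Each candidate lag is scored by the cross-correlation
    averaged over the overlapping samples.
    """
    best_lag, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        total, count = 0.0, 0
        for n, value in enumerate(reference):
            m = n + lag
            if 0 <= m < len(other):
                total += value * other[m]
                count += 1
        if count and total / count > best_score:
            best_lag, best_score = lag, total / count
    return best_lag
```

The brute-force search costs O(N * max_lag). For long recordings, an FFT-based correlation would be the usual replacement.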


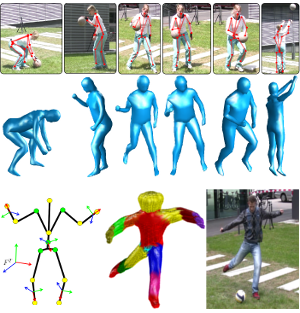

Markerless Motion Capture for Man-Machine Interaction. IEEE Conference on Computer Vision and Pattern Recognition (CVPR 2008). Bodo Rosenhahn, Christian Schmaltz, Thomas Brox, Joachim Weickert, Daniel Cremers and Hans-Peter Seidel.

Abstract: This work deals with modeling and markerless tracking of athletes interacting with sports gear. In contrast to classical markerless tracking, the interaction with sports gear brings joint movement restrictions due to additional constraints: while humans can generally use all their joints, interaction with the equipment imposes a coupling between certain joints. A cyclist performing a cycling pattern is one example: the feet are supposed to stay on the pedals, which in turn are restricted to move along a circular trajectory in 3D space. In this paper, we present a markerless motion capture system that takes the lower-dimensional pose manifold into account by modeling the motion restrictions via soft constraints during pose optimization. Experiments with two different models, a cyclist and a snowboarder, demonstrate the applicability of the method. Moreover, we present motion capture results for challenging outdoor scenes including shadows and strong illumination changes.
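
The pedal constraint in the abstract keeps each foot on a circle in 3D. A minimal sketch of a soft constraint, under stated assumptions, takes the squared distance from the foot to that circle. It scales this distance by a hypothetical weight and adds it to whatever data error the tracker already minimizes. The circle parameters, the weight, and the function names are illustrative and not taken from the paper.

```python
import math

def distance_to_circle(point, center, normal, radius):
    """Euclidean distance from a 3D point to a circle given by center, axis normal and radius."""
    n_len = math.sqrt(sum(c * c for c in normal))
    n = [c / n_len for c in normal]
    d = [p - c for p, c in zip(point, center)]
    height = sum(a * b for a, b in zip(d, n))          # offset along the circle's axis
    in_plane = [a - height * b for a, b in zip(d, n)]  # projection into the circle's plane
    radial = math.sqrt(sum(c * c for c in in_plane))
    return math.sqrt((radial - radius) ** 2 + height ** 2)

def constrained_energy(data_error, foot_position, pedal_circle, weight=10.0):
    """Data term plus a soft penalty that grows as the foot leaves the pedal circle."""
    return data_error + weight * distance_to_circle(foot_position, *pedal_circle) ** 2
```

Because the penalty is soft, the optimizer can trade a small deviation from the pedal circle against a better image fit instead of enforcing the constraint exactly.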


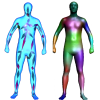

A Statistical Model of Human Pose and Body Shape. Eurographics 2009. Nils Hasler, Carsten Stoll, M. Sunkel, Bodo Rosenhahn and Hans-Peter Seidel.

Abstract: Generation and animation of realistic humans is an essential part of many projects in today's media industry. In particular, the games and special effects industries depend heavily on realistic human animation. In this work, a unified model that describes both human pose and body shape is introduced, which allows us to accurately model muscle deformations not only as a function of pose but also dependent on the physique of the subject. Coupled with the model's ability to generate arbitrary human body shapes, it greatly simplifies the generation of highly realistic character animations. A learning-based approach is trained on approximately 550 full-body 3D laser scans taken of 114 subjects. Scan registration is performed using a non-rigid deformation technique. Then, a rotation-invariant encoding of the acquired exemplars permits the computation of a statistical model that simultaneously encodes pose and body shape. Finally, morphing or generating meshes according to several constraints simultaneously can be achieved by training semantically meaningful regressors.
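
Statistical body models are commonly built with principal component analysis (PCA) over registered, vectorized scans. The paper's rotation-invariant encoding of pose and shape is not reproduced here. As an assumed stand-in, the sketch below finds the dominant principal component of a set of feature vectors by power iteration on the sample covariance. Each sample could be, for example, the flattened vertex coordinates of a registered mesh.

```python
def first_principal_component(samples, iterations=200):
    """Mean and dominant eigenvector of the sample covariance, found by power iteration.

    Each sample is a flat feature vector; at least two samples are required.
    """
    n, d = len(samples), len(samples[0])
    mean = [sum(s[j] for s in samples) / n for j in range(d)]
    centered = [[s[j] - mean[j] for j in range(d)] for s in samples]
    cov = [[sum(c[i] * c[j] for c in centered) / (n - 1) for j in range(d)] for i in range(d)]
    v = [float(j + 1) for j in range(d)]  # arbitrary non-zero starting vector
    for _ in range(iterations):
        w = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = sum(x * x for x in w) ** 0.5
        v = [x / norm for x in w]  # renormalize so the iterate stays a unit vector
    return mean, v
```

Take the four 2D samples `[(1, 1.1), (2, 1.9), (3, 3.1), (4, 3.9)]`. The returned direction is approximately `(0.720, 0.694)`, close to the diagonal along which the data vary. The iteration assumes the starting vector is not orthogonal to the dominant eigenvector.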


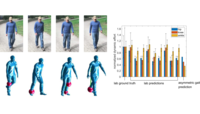

A system for articulated tracking incorporating a clothing model. Machine Vision and Applications. Bodo Rosenhahn, Uwe Kersting, Katie Powell, …

Abstract: In this paper, an approach for motion capture of dressed people is presented. A cloth draping method is incorporated in a silhouette-based motion capture system. This leads to a simultaneous estimation of pose, joint angles, cloth draping parameters and wind forces. An error functional is formalized to minimize the involved parameters simultaneously. This allows for reconstruction of the underlying kinematic structure, even though it is covered with fabrics. Finally, a quantitative error analysis is performed. Pose results are compared with results obtained from a commercially available marker-based tracking system. The deviations have a magnitude of three degrees, which indicates a reasonably stable approach.


Drift-free Tracking of Rigid and Articulated Objects. IEEE Conference on Computer Vision and Pattern Recognition (CVPR 2008). Juergen Gall, Bodo Rosenhahn and Hans-Peter Seidel.

Abstract: Model-based 3D trackers estimate the position, rotation, and joint angles of a given model from video data of one or multiple cameras. They often rely on image features that are tracked over time, but the accumulation of small errors results in a drift away from the target object. In this work, we address the drift problem for the challenging task of human motion capture and tracking in the presence of multiple moving objects, where the error accumulation becomes even more problematic due to occlusions. To this end, we propose an analysis-by-synthesis framework for articulated models. It combines the complementary concepts of patch-based and region-based matching to track both structured and homogeneous body parts. The performance of our method is demonstrated for rigid bodies, body parts, and full human bodies where the sequences contain fast movements, self-occlusions, multiple moving objects, and clutter. We also provide a quantitative error analysis and comparison with other model-based approaches.
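
The analysis-by-synthesis idea compares what the model predicts with what the camera observed, for example a rendered patch of a textured body part. One common patch similarity, assumed here rather than taken from the paper, is normalized cross-correlation (NCC). The sketch also hints at why region-based matching is needed as a complement. On a homogeneous patch the NCC is undefined, so the function returns 0.

```python
import math

def normalized_cross_correlation(patch_a, patch_b):
    """Similarity in [-1, 1] between two equally sized patches given as flat lists.

    Subtracting the mean and dividing by the norm makes the score invariant to
    brightness changes of the form a * I + b with a > 0.
    """
    n = len(patch_a)
    mean_a = sum(patch_a) / n
    mean_b = sum(patch_b) / n
    da = [x - mean_a for x in patch_a]
    db = [y - mean_b for y in patch_b]
    denom = math.sqrt(sum(x * x for x in da) * sum(y * y for y in db))
    if denom == 0.0:
        return 0.0  # a constant patch carries no structure to match
    return sum(x * y for x, y in zip(da, db)) / denom
```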


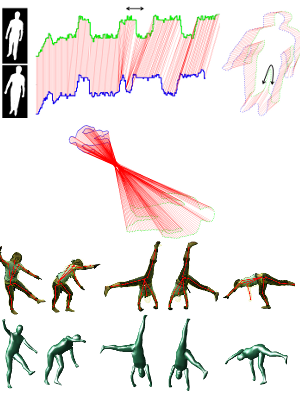

Scaled Motion Dynamics for Markerless Motion Capture. IEEE Conference on Computer Vision and Pattern Recognition (CVPR 2007). Bodo Rosenhahn, Thomas Brox and Hans-Peter Seidel.

Abstract: This work proposes a way to use a-priori knowledge on motion dynamics for markerless human motion capture (MoCap). Specifically, we match tracked motion patterns to training patterns in order to predict states in successive frames. Modeling the motion by means of twists allows for a proper scaling of the prior. Consequently, there is no need for training data of different frame rates or velocities. Moreover, the method allows very different motion patterns to be combined. Experiments in indoor and outdoor scenarios demonstrate the continuous tracking of familiar motion patterns in case of artificial frame drops or in situations insufficiently constrained by the image data.
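
The abstract notes that modeling motion with twists allows a learned prior to be scaled to other frame rates or velocities. Here is a planar illustration under stated assumptions. Suppose one frame of motion is the rigid transform exp(xi) of a twist xi = (vx, vy, omega). Halving the twist then gives a motion that, applied twice, reproduces the original frame-to-frame motion exactly. The function names and the 2D restriction are illustrative, since the paper works with 3D twists.

```python
import math

def exp_se2(vx, vy, omega):
    """Exponential map of a planar twist (vx, vy, omega) to a rigid motion (angle, tx, ty)."""
    if abs(omega) < 1e-12:
        return 0.0, vx, vy  # pure translation
    s, c = math.sin(omega), math.cos(omega)
    tx = (s * vx - (1.0 - c) * vy) / omega
    ty = ((1.0 - c) * vx + s * vy) / omega
    return omega, tx, ty

def compose(a, b):
    """Rigid motion that applies b first, then a."""
    ang_a, ax, ay = a
    ang_b, bx, by = b
    c, s = math.cos(ang_a), math.sin(ang_a)
    return ang_a + ang_b, c * bx - s * by + ax, s * bx + c * by + ay
```

In this toy setting, scaling the twist coordinates by the frame-rate ratio yields consistent intermediate poses. This matches the motivation stated in the abstract for not needing training data at each frame rate.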


Nonparametric Density Estimation with Adaptive, Anisotropic Kernels for Human Motion Tracking. 2nd Workshop on Human Motion. Thomas Brox, Bodo Rosenhahn, Daniel Cremers and Hans-Peter Seidel.

Abstract: In this paper, we propose to model priors on human motion by means of nonparametric kernel densities. Kernel densities avoid assumptions on the shape of the underlying distribution and let the data speak for themselves. In general, kernel density estimators suffer from the problem known as the curse of dimensionality, i.e., the amount of data required to cover the whole input space grows exponentially with the dimension of this space. In many applications, such as human motion tracking, though, this problem turns out to be less severe, since the relevant data concentrate in a much smaller subspace than the original high-dimensional space. As we demonstrate in this paper, the concentration of human motion data on lower-dimensional manifolds establishes kernel density estimation as a transparent tool that is able to model priors on arbitrary mixtures of human motions. Further, we propose to support the ability of kernel estimators to capture distributions on low-dimensional manifolds by replacing the standard isotropic kernel with an adaptive, anisotropic one.
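
A kernel density estimate places one kernel on every training sample and averages them. The abstract does not give the paper's exact scheme for making the kernels adaptive and anisotropic. One plausible choice, assumed here, is to shape each Gaussian kernel by the covariance of its sample's k nearest neighbours, so kernels stretch along the manifold on which the data lie. The sketch works in 2D, and `k` and the regularization `reg` are illustrative parameters.

```python
import math

def gaussian_2d(x, mean, cov):
    """Density of a 2-D normal distribution with full covariance cov = ((a, b), (b, c))."""
    (a, b), (_, c) = cov
    det = a * c - b * b
    dx, dy = x[0] - mean[0], x[1] - mean[1]
    # Quadratic form d^T cov^{-1} d using the closed-form 2x2 inverse.
    q = (c * dx * dx - 2.0 * b * dx * dy + a * dy * dy) / det
    return math.exp(-0.5 * q) / (2.0 * math.pi * math.sqrt(det))

def local_covariance(points, i, k, reg=1e-3):
    """Second moment of the k nearest neighbours around points[i], regularized to stay invertible."""
    center = points[i]
    neighbours = sorted(points, key=lambda p: (p[0] - center[0]) ** 2 + (p[1] - center[1]) ** 2)[:k]
    a = sum((p[0] - center[0]) ** 2 for p in neighbours) / k + reg
    c = sum((p[1] - center[1]) ** 2 for p in neighbours) / k + reg
    b = sum((p[0] - center[0]) * (p[1] - center[1]) for p in neighbours) / k
    return ((a, b), (b, c))

def adaptive_kde(x, points, k=5):
    """Kernel density estimate with one anisotropic Gaussian kernel per sample."""
    return sum(gaussian_2d(x, p, local_covariance(points, i, k))
               for i, p in enumerate(points)) / len(points)
```

Consider samples spread along the diagonal line y = x. The estimate at a point on the line is much larger than at a point about 0.28 units off the line. This is because each kernel is narrow perpendicular to the data, whereas an isotropic kernel wide enough to cover the gaps along the line would also smear density off it.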
